- Blog

- Parasite city game mother

- Dell rapid recovery powershell hyper v export

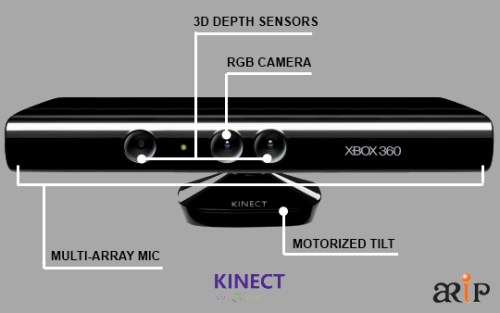

- Kinect max msp

- Mastercam x8 no sim found crack

- 108 names of lord vishnu in tamil pdf

- Greddy emanage blue map sensor

- Smoky mountain gold-ruby mine

- Angry birds seasons 4-1-0 crack

- Comisario montalbano online italiano

- Beyblade v force episodes in hindi

- Spb tv

- Ducar generator engine

- Adobe indesign cc 2018 by against clock

- Kedarnath movie cast and crew

- Movavi video converter 7 key

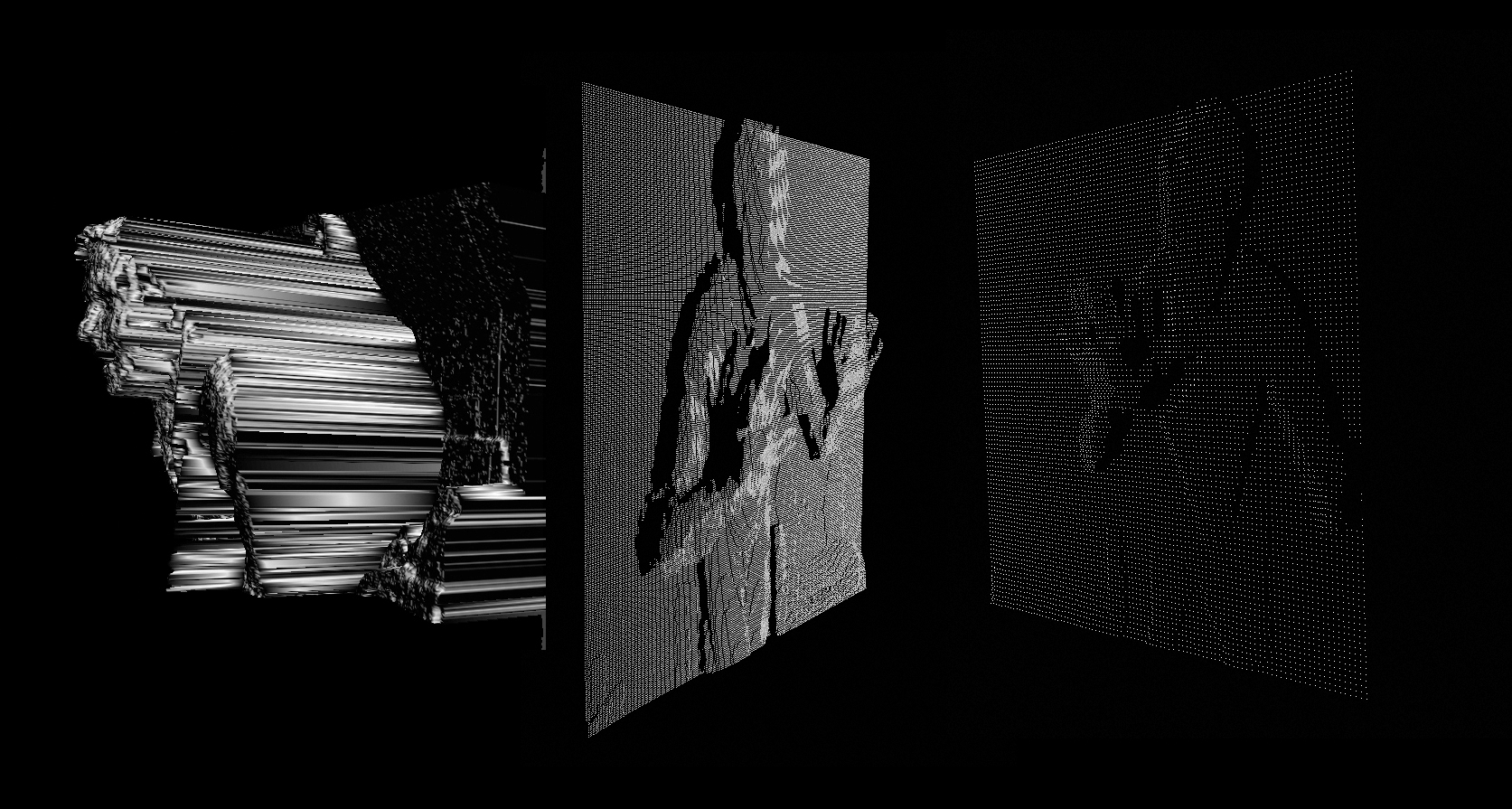

The result: audiovisual control, and The Future. Max/MSP is used here, I believe, just to translate OpenNI control to MIDI, and perhaps to DMX as well.
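To make the "translating OpenNI control to MIDI" step a little more concrete, here is a minimal Python sketch of that kind of mapping. It is not the patch used in the video: the function name `to_midi_cc`, the choice of controller 1, and the assumption that the tracker hands us a position already normalized to 0.0..1.0 are all hypothetical. The only fixed fact it relies on is the standard MIDI Control Change layout (status byte `0xB0` plus channel, then a 7-bit controller number and a 7-bit value).

```python
def to_midi_cc(position, controller=1, channel=0):
    """Map a normalized position (0.0..1.0) to a 3-byte MIDI Control Change message.

    `position` is assumed to come from a skeleton tracker (for example a hand's
    x coordinate), already scaled to the 0.0..1.0 range. That scaling step and
    the default controller number are illustrative assumptions.
    """
    # Clamp so that tracking noise outside the expected range cannot overflow.
    clamped = min(max(position, 0.0), 1.0)
    # MIDI data bytes are 7-bit, so the usable value range is 0..127.
    value = round(clamped * 127)
    # Control Change status byte: high nibble 0xB, low nibble is the channel.
    status = 0xB0 | (channel & 0x0F)
    return bytes([status, controller & 0x7F, value])


if __name__ == "__main__":
    # A hand fully to the right (1.0) sends the maximum controller value.
    print(to_midi_cc(1.0).hex())
```

In a real setup the resulting bytes would be handed to whatever MIDI output the host environment provides; that part is deliberately left out here.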

Kinect max msp drivers

OpenNI is the “natural interface” not-for-profit standards body and organization that allows drivers to work across multiple hardware devices (Kinect being the best-known). The lasers in question are a rig by Henry Strange, which allows computer control of laser direction using the DMX protocol. (DMX is a protocol similar to MIDI, though actually a bit simpler, if you can believe that, generally associated with lighting and show control.) I could say more, but I’ll let you watch the video and ponder.
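As a rough illustration of why DMX is "a bit simpler" than MIDI, the Python sketch below builds a single DMX512 frame: one start code byte followed by up to 512 channel slots, each holding an 8-bit level. How the laser rig actually assigns channels is not described in the text, so the pan and tilt channel numbers, the function names `build_dmx_frame` and `aim_laser`, and the normalized x/y inputs are all assumptions made for the example.

```python
def build_dmx_frame(levels, universe_size=512):
    """Build a DMX512 frame: a 0x00 start code followed by one byte per channel.

    `levels` maps 1-based channel numbers to levels; levels are clamped to 0..255.
    """
    frame = bytearray(universe_size + 1)  # index 0 stays 0x00, the start code
    for channel, level in levels.items():
        if not 1 <= channel <= universe_size:
            raise ValueError(f"DMX channel out of range: {channel}")
        frame[channel] = min(max(int(level), 0), 255)
    return bytes(frame)


def aim_laser(x, y, pan_channel=1, tilt_channel=2):
    """Turn a normalized (x, y) position into pan and tilt levels.

    The channel numbers are hypothetical; a real fixture defines its own layout.
    """
    def to_level(v):
        # Clamp to 0.0..1.0, then scale to the 8-bit DMX range.
        return round(min(max(v, 0.0), 1.0) * 255)

    return build_dmx_frame({pan_channel: to_level(x), tilt_channel: to_level(y)})


if __name__ == "__main__":
    frame = aim_laser(0.0, 1.0)
    print(len(frame), frame[:3].hex())
```

Sending the frame requires a DMX interface and its driver, which is outside the scope of this sketch.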

Kinect max msp plus

Matt Davis is seen here with Microsoft’s Kinect computer vision / 3D camera controller, plus, stealing the show, lasers.

Max MSP Jitter Kinect animation: creating an interactive animation using Max/MSP Jitter and video playback files. Interactive Animation by Caleb Shuey, Art & Technology Exhibition, Autumn 2013.

DotLib took the Pop-Up-Play (PUP) system to the Embrace Arts Centre in Leicester for a three-day workshop event run by Marianne Pape. The workshop aimed to use PUP to help a small group of home-educated children, aged 7-10, interact with the art exhibition in the gallery by Mark Hamilton.

Day one was spent unleashing the system on the children. They were introduced to the separate elements but descended into creative chaos when the Kinect part of PUP was activated. They immediately began interacting with the screen. This carried on for the rest of the morning, with different children exploring the different functionality of the system, at times switching roles and directing each other regarding how they were using the system. They were using the webcam alongside physical props to mix digital and physical elements. There were some great interactions of children creating a maze in the afternoon. This involved a maze being drawn and then captured by the webcam operator, a player who moved the physical piece around the maze (Mario Kart or army man), and a technologist to control the PUP system on the iPad. This gave the children the chance to really explore how they were going to use the different elements available, and they were very forthcoming with ideas.

Day two moved to a different room. The idea of the mazes developed further, as some of the children had made new mazes overnight, and they had also brought in objects from home. We also developed some new media to be added to the PUP system, both in terms of getting crafty and taking photos of the exhibition to put in the system, alongside photos of the children’s items from home. The children started to think about how they could get their parents involved in the process and demonstrate not only the system to them, but also all the content that they had created. They discussed bringing all the different content and created components together to form a game whilst getting to grips with the system. One of the things that was very impressive was their ability to master moving through different scales and with different setups, to highlight different media and extend the maps that had been created.

Day three saw a move back to the main hall and a final hunkering down of roles. The children had pre-selected which area they wanted to work in and assigned jobs to each other and themselves: who would control the webcam, who would announce things, who would do sound effects and so on. There were some rehearsals with the system and new jobs. We also loaded up all the new media for them to show their parents and incorporate into the games. The actual presentation went fantastically, and the kids really owned the system and the content with very little input from us. The parents seemed to really see the value in being able to contribute to the system. Whatever the preferred mode of interaction was for the child, the system provided an outlet for their creative ventures. It was a brilliant three days of straddling the digital and physical worlds and exploring art and interactive lands.